Background

After two long years of working remotely, I had the great pleasure of visiting my fantastic colleagues in NYC at CORE studio Thornton Tomasetti for the annual AEC Tech event. AEC Tech is THE event for computational designers, AEC software developers and 3D hackers to get the latest news in the field and to connect with like-minded people. This year was the 10th anniversary, and it’s truly amazing to see the community that’s been built around it over the years. The event consists of workshops, a symposium and a hackathon, all of which can be attended either remotely or in person.

Introducing configAR

For the hackathon, I teamed up with Ben Fortunato, Elcin Ertugrul, Alice Huang, Val Tzvetkov, Luke Gehron, Knut Tjensvoll, Rolando Villena, Atishay Lahri and Gokul Gupta, and together we built a prototype of a system we call configAR.

The idea behind configAR is simple: by connecting augmented reality with the superpowers of parametric design and Grasshopper3d, we made it possible to visualize configurable designs in the real world using nothing but your phone. It works like this:

- Launch the configAR iOS app on your phone and activate the camera.

- Define a floor surface to be populated by tapping points in the camera view.

- Click “Generate” and enjoy automatically generated furniture layouts placed in AR!

The concept of configAR described in three steps: define a surface in AR, generate geometric content that follows the surface using Grasshopper, and finally stream the results back to AR.

Behind the scenes, the floor surface is sent to a backend running Grasshopper headlessly, which generates an optimized layout for the given input. The geometric output is then mapped back into your camera view so that it matches the points you defined.

Demo

The screen recording below demonstrates the functionality of configAR.

Demo of configAR: define the surface using AR and get back geometric content generated by Grasshopper.

Note that in the demo above we place a ceiling installation in the scene. The ceiling installation is generated by a Grasshopper script from the input polyline. However, because of the abstraction layer (explained in the Technology section), the Grasshopper definition generating the output can be pretty much anything, as long as the interface is the same: a polyline in, and geometry out.

The interface between the iOS app and the backend. The Grasshopper definition generating the output can be pretty much anything, as long as the interface is the same: a polyline in, and geometry out.
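To make the "polyline in, geometry out" contract a bit more concrete, here is a minimal Python sketch. It is not taken from the configAR code base: the names `GeometrySolver`, `EchoSolver`, `CountSolver` and `generate` are hypothetical, and the stand-in solvers return placeholder bytes rather than real geometry.

```python
from typing import Protocol, Sequence, Tuple

# A point picked in AR, assumed here to be an (x, y, z) tuple.
Point = Tuple[float, float, float]


class GeometrySolver(Protocol):
    """Hypothetical contract: a polyline in, geometry (as bytes) out."""

    def solve(self, polyline: Sequence[Point]) -> bytes:
        ...


class EchoSolver:
    """Stand-in solver: encodes the polyline as text instead of real geometry."""

    def solve(self, polyline: Sequence[Point]) -> bytes:
        rows = (",".join(f"{c:g}" for c in point) for point in polyline)
        return ";".join(rows).encode("utf-8")


class CountSolver:
    """Another stand-in: only reports how many points it received."""

    def solve(self, polyline: Sequence[Point]) -> bytes:
        return f"points:{len(polyline)}".encode("utf-8")


def generate(solver: GeometrySolver, polyline: Sequence[Point]) -> bytes:
    """Client-side code depends only on the contract, not on a concrete solver."""
    if len(polyline) < 3:
        raise ValueError("a surface needs at least three points")
    return solver.solve(polyline)


floor = [(0, 0, 0), (1, 0, 0), (1, 1, 0)]
print(generate(EchoSolver(), floor))
print(generate(CountSolver(), floor))
```

Swapping `EchoSolver` for `CountSolver` changes nothing in `generate`, which is the point of keeping the interface fixed: the caller never needs to know which definition produced the output.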

For example, we also experimented with other applications of the system, such as rebar layouts and massing generation.

Technology

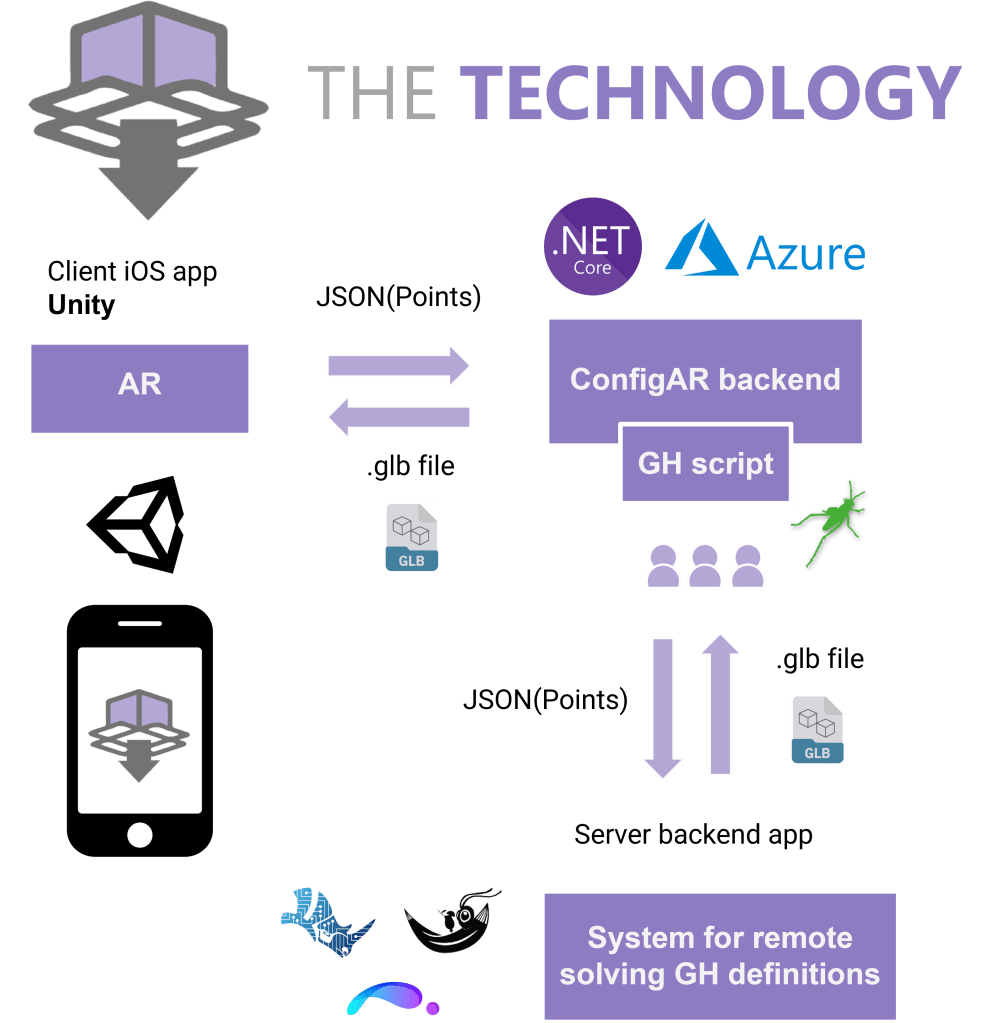

The configAR system is composed of three main components:

- An iOS app built with Unity and C#. It contains the AR logic that lets the user select points in the camera view.

- An ASP.NET Core backend which orchestrates and manages the communication with the iOS app through a REST Web API. It creates an abstraction layer between the client and the GH solver (see below). For the hackathon, we deployed this API on an Azure Linux server.

- A backend worker capable of solving Grasshopper definitions. This can be Rhino.Compute, Resthopper, or ShapeDiver. For the hackathon we used ShapeDiver.

The technology behind configAR

The iOS app collects the coordinates of the polyline and passes them to the backend as JSON. In the JSON, we also specify which GH definition should be used. As described above, this can be any GH definition as long as it follows the interface. The backend delegates the request to a remote GH solver, and the geometry comes back as a .glb file.
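The request flow described above could look roughly like the following Python sketch. It is an illustration only, not the actual configAR API (the real backend is ASP.NET Core): the JSON field names `definition` and `polyline`, the functions `build_request` and `handle_request`, and the fake solver are all assumptions made for this example.

```python
import json
from typing import Callable, Dict, List

# A polyline as a list of [x, y, z] coordinates (assumed layout).
Polyline = List[List[float]]
# A solver takes a polyline and returns geometry bytes (a .glb file in configAR).
Solver = Callable[[Polyline], bytes]


def build_request(definition: str, polyline: Polyline) -> str:
    """Client side: serialize the chosen definition name and the polyline."""
    return json.dumps({"definition": definition, "polyline": polyline})


def handle_request(body: str, solvers: Dict[str, Solver]) -> bytes:
    """Backend side: pick the solver named in the request and delegate to it."""
    payload = json.loads(body)
    name = payload["definition"]
    if name not in solvers:
        raise KeyError(f"unknown definition: {name}")
    return solvers[name](payload["polyline"])


def fake_ceiling_solver(polyline: Polyline) -> bytes:
    """Placeholder for a remote GH solver; returns dummy bytes, not a real .glb."""
    return f"ceiling-for-{len(polyline)}-points".encode("utf-8")


solvers = {"ceiling": fake_ceiling_solver}
body = build_request("ceiling", [[0, 0, 0], [4, 0, 0], [4, 3, 0], [0, 3, 0]])
print(handle_request(body, solvers))
```

Because the definition is just a name in the payload, adding a new use case in this sketch means registering one more entry in `solvers`; the client code stays the same.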

This project is open source and can be accessed following the links below. Please excuse the general chaos of the code. It is a hackathon project after all 🙂

https://github.com/EmilPoulsen/ConfigAR.Backend

https://github.com/EmilPoulsen/ConfigAR.App

Future

The concept of hooking up AR with a Grasshopper-powered backend is powerful in our opinion. This hack only scratches the surface of what’s possible. Imagine integrating the latest iOS RoomPlan and having kitchen layout optimizations generated from Grasshopper visualized in AR 🤯

If we’d had more time at the hackathon, there were certainly things we would’ve liked to improve. The UI is a bit technical, and the interoperability between the Unity scene and the geometric content from Grasshopper wasn’t fully fleshed out.

Thanks to everyone involved in AEC Tech. It was a great success, and I can’t wait for next year’s event. Also, stay tuned for the video presentation, which will be uploaded soon!

The team: Gokul Gupta | Luke Gehron | Ben Fortunato | Alice Huang | Elcin Ertugrul

Emil Poulsen | Atishay Lahri | Rolando Villena | Knut Tjensvoll | Val Tzvetkov